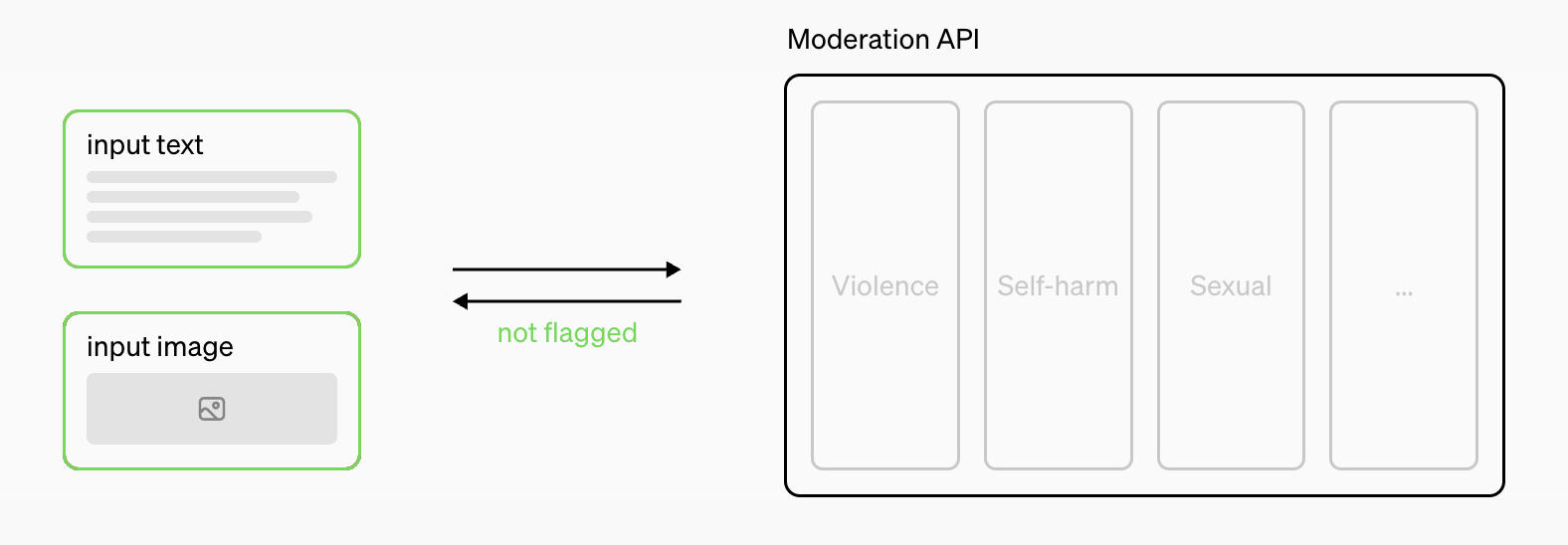

OpenAI has rolled out a significant upgrade to its Moderation API, introducing a multimodal moderation model. The new model analyzes both text and image inputs, which allows more accurate detection of harmful content such as hate speech, violence, and explicit material. Unlike its text-only predecessor, this model is built on OpenAI’s GPT-4o and is designed to deliver faster, more efficient, and more precise results. The multimodal approach marks a crucial step forward in content moderation by tackling the challenges posed by diverse forms of media.

Key Features of the New Multimodal Model

1. Text and Image Moderation

The multimodal model stands out for its ability to handle both text and images in one unified system. The previous moderation model accepted text only. Because the new model can interpret a piece of text and an accompanying image in a single request, it is more robust in protecting users from harmful content.
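As a minimal sketch of what a combined text-and-image request might look like, the snippet below builds a JSON payload and posts it using only Python's standard library. The endpoint URL, the `omni-moderation-latest` model name, and the `text` / `image_url` input shape are not stated in this article; they reflect OpenAI's public API reference as understood here and should be checked against the current documentation. `build_payload` and `moderate` are hypothetical helper names.

```python
import json
import urllib.request

# Assumptions (not from the article): endpoint URL and model name.
MODERATION_URL = "https://api.openai.com/v1/moderations"
MODEL = "omni-moderation-latest"


def build_payload(text=None, image_url=None):
    """Build a request body containing text, an image URL, or both."""
    parts = []
    if text:
        parts.append({"type": "text", "text": text})
    if image_url:
        parts.append({"type": "image_url", "image_url": {"url": image_url}})
    if not parts:
        raise ValueError("provide text, image_url, or both")
    return {"model": MODEL, "input": parts}


def moderate(payload, api_key):
    """POST the payload and return the decoded JSON response."""
    request = urllib.request.Request(
        MODERATION_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)


# Example (requires a valid key and network access):
# payload = build_payload("caption text", "https://example.com/photo.png")
# result = moderate(payload, api_key="...")
```

Keeping payload construction separate from the network call makes the request shape easy to inspect and unit-test without an API key.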

2. Improved Accuracy

This upgraded API delivers better performance across critical categories, such as identifying violent or sexually explicit material. It’s particularly effective in detecting subtle or contextually complex instances of harmful behavior, making it ideal for applications requiring nuanced moderation, such as social media platforms, forums, and community-driven websites.

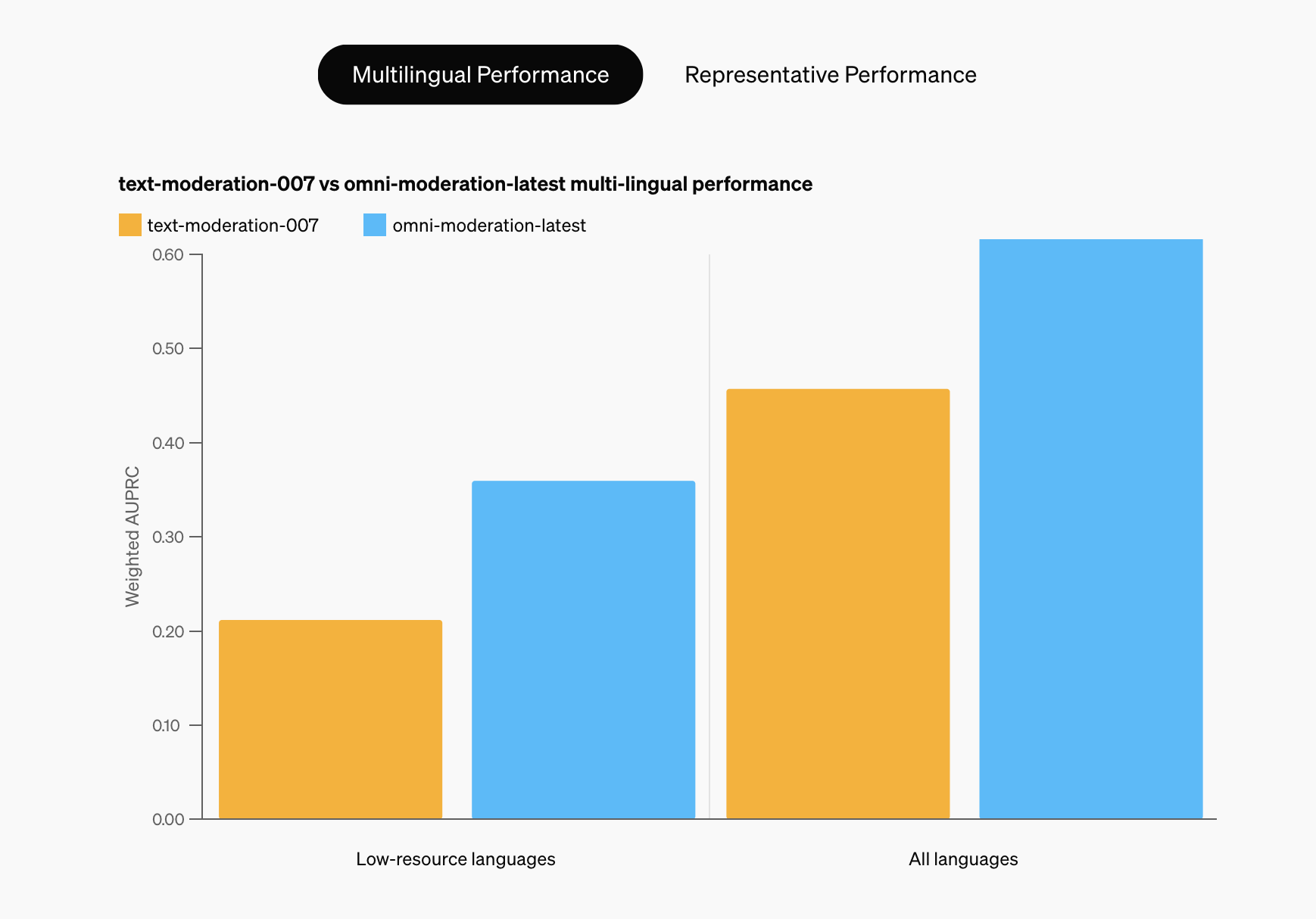

3. Global and Multilingual Support

Another major advantage of the new model is its enhanced ability to moderate non-English content. This opens doors for global applications, ensuring that harmful content is efficiently flagged regardless of the language used. This feature expands the reach of the API to a more diverse user base.

4. Calibrated Scoring for Consistency

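OpenAI has introduced calibrated scoring to make moderation results more consistent. The per-category scores are intended to more closely reflect the probability that a piece of content violates the relevant policy, so a given score means roughly the same thing from one category to the next. This lets developers set a separate threshold for each category, balancing strict moderation against user engagement.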
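One way to act on calibrated scores is to compare each category's score against its own threshold. The sketch below assumes the response exposes a `category_scores` mapping of category name to a value between 0 and 1; the threshold values, the sample scores, and the helper name `categories_over_threshold` are all invented for illustration.

```python
# Hypothetical per-category thresholds; tune these for your own platform.
THRESHOLDS = {"violence": 0.50, "sexual": 0.30, "hate": 0.40}
# Fallback for any category without an explicit threshold.
DEFAULT_THRESHOLD = 0.70


def categories_over_threshold(category_scores, thresholds=THRESHOLDS,
                              default=DEFAULT_THRESHOLD):
    """Return the sorted names of categories whose score meets its threshold."""
    return sorted(
        name
        for name, score in category_scores.items()
        if score >= thresholds.get(name, default)
    )


# Made-up scores in the assumed shape of the `category_scores` field.
sample_scores = {"violence": 0.62, "sexual": 0.05, "hate": 0.41, "harassment": 0.55}
print(categories_over_threshold(sample_scores))  # ['hate', 'violence']
```

Here `harassment` is not flagged because it falls back to the stricter default of 0.70, which shows how a platform could be lenient in one category and strict in another.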

Developer-Friendly and Free Access

The upgraded Moderation API is available for free, allowing developers to easily integrate it into their applications. By providing this advanced tool at no cost, OpenAI aims to encourage widespread adoption, contributing to safer digital ecosystems.

This upgrade reflects OpenAI’s commitment to advancing content moderation technologies, offering scalable solutions for platforms of all sizes. Developers can rely on the API to safeguard their communities from harmful interactions while also streamlining the moderation process.
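To illustrate how such a check might slot into an application, here is a rough sketch of a pre-publish hook. It assumes the API response contains a `results` list whose first entry has a boolean `flagged` field and a `categories` mapping of booleans. The function `review_post` and the stub `fake_moderate` are hypothetical; the stub stands in for a real API call so the example runs offline.

```python
def review_post(post_text, moderate_fn):
    """Hold a post for review if the moderation result flags it."""
    response = moderate_fn(post_text)
    result = response["results"][0]
    if result["flagged"]:
        # Collect the names of the categories marked True.
        reasons = sorted(name for name, hit in result["categories"].items() if hit)
        return {"status": "held", "reasons": reasons}
    return {"status": "published", "reasons": []}


def fake_moderate(text):
    """Offline stand-in for the real API: flags text containing 'attack'."""
    hit = "attack" in text.lower()
    return {"results": [{"flagged": hit,
                         "categories": {"violence": hit, "hate": False}}]}


print(review_post("Nice photo!", fake_moderate))
# {'status': 'published', 'reasons': []}
print(review_post("I will attack you", fake_moderate))
# {'status': 'held', 'reasons': ['violence']}
```

Passing the moderation call in as a function keeps the publishing logic independent of any particular client library, so the stub can be swapped for a real request in production.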

For more information, see the full announcement on OpenAI’s official site.